Score (statistics)

In statistics, the score, score function, efficient score[1] or informant[2] plays an important role in several aspects of inference. For example:

- in formulating a test statistic for a locally most powerful test;[3]

- in approximating the error in a maximum likelihood estimate;[4]

- in demonstrating the asymptotic sufficiency of a maximum likelihood estimate;[4]

- in the formulation of confidence intervals;[5]

- in demonstrations of the Cramér–Rao inequality.[6]

The score function is also used in computational statistics, where it appears in iterative methods for computing maximum likelihood estimates.

Definition

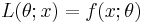

The score or efficient score [1] is the gradient (the vector of partial derivatives), with respect to some parameter  , of the logarithm (commonly the natural logarithm) of the likelihood function. If the observation is

, of the logarithm (commonly the natural logarithm) of the likelihood function. If the observation is  and its likelihood is

and its likelihood is  , then the score

, then the score  can be found through the chain rule:

can be found through the chain rule:

Thus the score  indicates the sensitivity of

indicates the sensitivity of  (its derivative normalized by its value). Note that

(its derivative normalized by its value). Note that  is a function of

is a function of  and the observation

and the observation  , so that, in general, it is not a statistic. However in certain applications, such as the score test, the score is evaluated at a specific value of

, so that, in general, it is not a statistic. However in certain applications, such as the score test, the score is evaluated at a specific value of  (such as a null-hypothesis value, or at the maximum likelihood estimate of

(such as a null-hypothesis value, or at the maximum likelihood estimate of  ), in which case the result is a statistic.

), in which case the result is a statistic.
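The chain-rule identity can be checked numerically. The following is a minimal sketch, assuming an exponential model with rate θ (a model chosen here purely for illustration, not taken from the article); the function names and the finite-difference step `h` are likewise hypothetical. It compares the analytic derivative of the log-likelihood with the derivative of the likelihood divided by the likelihood itself.

```python
import math

def likelihood(theta, x):
    """Likelihood of one observation x under an assumed exponential model with rate theta."""
    return theta * math.exp(-theta * x)

def score_analytic(theta, x):
    """d/d(theta) of log L = log(theta) - theta * x, worked out by hand."""
    return 1.0 / theta - x

def score_via_chain_rule(theta, x, h=1e-6):
    """(1 / L) * dL/d(theta), with dL/d(theta) approximated by a central difference."""
    d_likelihood = (likelihood(theta + h, x) - likelihood(theta - h, x)) / (2.0 * h)
    return d_likelihood / likelihood(theta, x)

theta, x = 2.0, 0.3
print(score_analytic(theta, x))
print(score_via_chain_rule(theta, x))
```

The two printed values should agree up to the finite-difference error, which illustrates that the score is the derivative of the likelihood normalized by its value.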

Properties

Mean

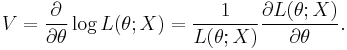

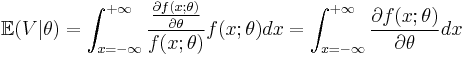

Under some regularity conditions, the expected value of  with respect to the observation

with respect to the observation  , given

, given  , written

, written  , is zero. To see this rewrite the likelihood function

, is zero. To see this rewrite the likelihood function  as a probability density function,

as a probability density function,

If certain differentiability conditions are met, the integral may be rewritten as

It is worth restating the above result in words: the expected value of the score, evaluated at the true parameter value, is zero. Thus, if one were to sample repeatedly from some distribution and calculate the score at the true parameter value each time, the mean of the scores would tend to zero as the number of samples approached infinity.
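The repeated-sampling statement can be illustrated with a small simulation. This is a sketch under assumptions that are not in the article: an exponential model with rate θ (whose score for one observation x is 1/θ − x), a fixed random seed, and the hypothetical helper names below. It also evaluates the score at a parameter value other than the one that generated the data, where the mean is no longer expected to vanish.

```python
import random

def score(theta, x):
    """Score of one observation x under an assumed exponential model with rate theta."""
    return 1.0 / theta - x

def mean_score(theta_eval, theta_true, n_samples, seed=0):
    """Average the score at theta_eval over samples drawn with rate theta_true."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_samples):
        x = rng.expovariate(theta_true)
        total += score(theta_eval, x)
    return total / n_samples

# Evaluated at the data-generating parameter: the average should be near zero.
print(mean_score(2.0, 2.0, 200_000))
# Evaluated at a different parameter: the average should be near 1/3 - 1/2.
print(mean_score(3.0, 2.0, 200_000))
```

The simulation only approximates the expectation, so the first value is close to zero rather than exactly zero.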

Variance

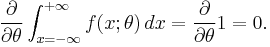

The variance of the score is known as the Fisher information and is written  . Because the expectation of the score is zero, this may be written as

. Because the expectation of the score is zero, this may be written as

Note that the Fisher information, as defined above, is not a function of any particular observation, as the random variable  has been averaged out. This concept of information is useful when comparing two methods of observation of some random process.

has been averaged out. This concept of information is useful when comparing two methods of observation of some random process.
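As a rough numerical illustration, the Fisher information can be estimated either as the sample variance of the score or as the sample mean of the squared score. The sketch below assumes an exponential model with rate θ, for which the Fisher information of a single observation is 1/θ²; the model, seed, and function names are illustrative assumptions rather than part of the article.

```python
import random

def score(theta, x):
    """Score of one observation x under an assumed exponential model with rate theta."""
    return 1.0 / theta - x

def fisher_information_mc(theta, n_samples, seed=0):
    """Monte Carlo estimates: (variance of the score, mean of the squared score)."""
    rng = random.Random(seed)
    scores = [score(theta, rng.expovariate(theta)) for _ in range(n_samples)]
    mean = sum(scores) / n_samples
    variance = sum((s - mean) ** 2 for s in scores) / n_samples
    second_moment = sum(s * s for s in scores) / n_samples
    return variance, second_moment

theta = 2.0
variance, second_moment = fisher_information_mc(theta, 200_000)
# Both estimates should be close to the closed-form value 1 / theta**2.
print(variance, second_moment, 1.0 / theta**2)
```

The two estimates differ only by the square of the sample mean of the score, which is small because the expected score is zero.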

Example

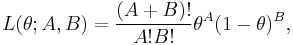

Consider a Bernoulli process, with A successes and B failures; the probability of success is θ.

Then the likelihood L is

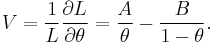

so the score V is

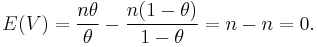

We can now verify that the expectation of the score is zero. Noting that the expectation of A is nθ and the expectation of B is n(1 − θ), we can see that the expectation of V is

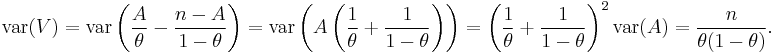

We can also check the variance of  . We know that A + B = n (so B = n - A) and the variance of A is nθ(1 − θ) so the variance of V is

. We know that A + B = n (so B = n - A) and the variance of A is nθ(1 − θ) so the variance of V is
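The expectation and variance of the score in this example can be checked exactly by summing over the binomial distribution of A. The following sketch uses the score A/θ − (n − A)/(1 − θ) implied by the likelihood θ^A (1 − θ)^B; the particular values n = 10 and θ = 0.3 and the function names are arbitrary illustrative choices.

```python
from math import comb

def score(theta, a, n):
    """Score for a successes in n Bernoulli trials: a/theta - (n - a)/(1 - theta)."""
    return a / theta - (n - a) / (1.0 - theta)

def score_moments(theta, n):
    """Exact mean and variance of the score, summing over the binomial pmf of A."""
    pmf = [comb(n, a) * theta**a * (1.0 - theta) ** (n - a) for a in range(n + 1)]
    mean = sum(p * score(theta, a, n) for a, p in enumerate(pmf))
    var = sum(p * (score(theta, a, n) - mean) ** 2 for a, p in enumerate(pmf))
    return mean, var

n, theta = 10, 0.3
mean, var = score_moments(theta, n)
# The mean should be zero and the variance n / (theta * (1 - theta)), up to rounding.
print(mean, var, n / (theta * (1.0 - theta)))
```

Because the sum runs over all possible values of A, the agreement is limited only by floating-point rounding rather than by sampling error.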

Applications

Scoring algorithm

The scoring algorithm is an iterative method for numerically determining the maximum likelihood estimator.
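A minimal sketch of such an iteration is given below, assuming the commonly stated update in which the current parameter value is incremented by the score divided by the Fisher information. To make more than one step necessary, the Bernoulli model with A successes in n trials is reparametrized by the log-odds η = log(θ/(1 − θ)); this parametrization, the starting value, the tolerance, and the function name are illustrative assumptions and not part of the article. In terms of η the score is A − nθ and the Fisher information is nθ(1 − θ).

```python
import math

def fisher_scoring_logit(a, n, eta=0.0, tol=1e-12, max_iter=50):
    """Estimate the success probability by Fisher scoring on the log-odds eta.

    Assumes 0 < a < n, so that the maximum likelihood estimate is interior.
    """
    for _ in range(max_iter):
        theta = 1.0 / (1.0 + math.exp(-eta))   # success probability implied by eta
        score = a - n * theta                  # derivative of the log-likelihood w.r.t. eta
        info = n * theta * (1.0 - theta)       # Fisher information w.r.t. eta
        step = score / info
        eta += step
        if abs(step) < tol:
            break
    return 1.0 / (1.0 + math.exp(-eta))

theta_hat = fisher_scoring_logit(a=7, n=10)
# The iteration should settle at the closed-form estimate a / n.
print(theta_hat, 7 / 10)
```

In the original parametrization by θ the same update reaches A/n in a single step from any starting value in (0, 1), so the log-odds form is used here only to show the iterative behaviour.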

Score test

Notes

- [1] Cox & Hinkley (1974), p. 107

- [2] Chentsov, N.N. (2001), "Informant", in Hazewinkel, Michiel, Encyclopedia of Mathematics, Springer, ISBN 978-1556080104, http://www.encyclopediaofmath.org/index.php?title=i/i051030

- [3] Cox & Hinkley (1974), p. 113

- [4] Cox & Hinkley (1974), p. 295

- [5] Cox & Hinkley (1974), pp. 222–223

- [6] Cox & Hinkley (1974), p. 254

References

- Cox, D.R., Hinkley, D.V. (1974) Theoretical Statistics, Chapman & Hall. ISBN 0-412-12420-3

- Schervish, Mark J. (1995). Theory of Statistics. New York: Springer. Section 2.3.1. ISBN 0387945466.
